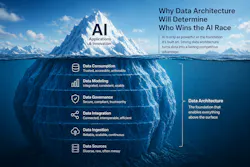

Why Data Architecture Will Determine Who Wins the AI Race

Key Highlights

- Many organizations are rushing to deploy AI without fully understanding their data environments, creating operational and security risks.

- Legacy IT architectures are ill-equipped for modern interconnected systems, making data discovery, control and governance more challenging.

- The convergence of OT and IT expands attack surfaces, requiring enhanced security measures and greater visibility into connected systems.

- AI governance is lagging, with organizations prioritizing speed over security, which could lead to high-impact failures.

- Proactive planning around data architecture, governance and security is essential before scaling AI to avoid future crises.

Enterprise investment in AI is accelerating at a pace few organizations are structurally prepared to support. While leaders focus on use cases, productivity gains and competitive advantage, many are overlooking a more fundamental requirement: the data architecture, governance and security foundation needed to make AI work at scale. Without it, even the most advanced tools risk amplifying inefficiencies, exposing vulnerabilities and delivering unreliable outcomes.

According to Pierre Bourgeix, CEO of ESI Convergent, the disconnect is both widespread and growing. Drawing on decades of experience across physical security, cybersecurity and enterprise infrastructure, Bourgeix sees organizations rushing to deploy AI on top of fragmented, poorly understood data environments. The result is not just underperformance — it’s a new layer of operational and security risk that many executives have yet to fully grasp.

Why AI strategy depends on data architecture readiness

That foundation starts with visibility. Most organizations don’t fully understand the data environments they’re asking AI to operate within, and that gap between AI ambition and data reality is quickly becoming one of the defining risks of the current AI cycle.

“If you don’t understand where your data lives, what it is and who has access to it, you shouldn’t be layering AI on top of it,” says Bourgeix.

How fragmented data environments increase AI risk

At a technical level, many organizations are still operating on architectures designed 15 to 20 years ago. These environments were built for a different era of IT — one defined by structured systems, known boundaries, and relatively predictable data flows.

Today, those assumptions no longer hold.

Operational Technology (OT) — the systems that run physical infrastructure, from manufacturing equipment to building controls — is now deeply interconnected with IT systems. At the same time, AI is being layered across both environments, pulling from data sources that are often poorly cataloged and loosely governed.

OT versus IT

Operational Technology (OT) runs the physical world: machines, infrastructure and industrial processes, where uptime and safety are critical.

Information Technology (IT) runs the digital world: data, systems and applications, where confidentiality and integrity are the priorities.

As these environments converge, the gap between how they operate and how they’re secured becomes a growing risk.

The result is a fragmented, highly interconnected ecosystem where visibility is limited and control is inconsistent.

“This isn’t just a technology problem; it’s a cascading systems problem across IT, operational technology, and data,” Bourgeix explains.

In practice, that fragmentation shows up in predictable ways. Organizations lack clear data discovery processes. They don’t know what data they have, where it resides or how it’s being used. Access controls are inconsistent. And OT systems — often managed outside of IT — introduce additional data flows that are rarely accounted for in enterprise architecture.

AI doesn’t solve these problems. It exposes them.

Why data architecture is the real AI competitive advantage

Much of the current AI conversation centers on models, tools and applications. But Bourgeix argues that the real differentiator will be far less visible — and far more foundational.

“When we say data architecture will determine who wins the AI race, it’s not just technical,” he says. “It’s operational and strategic.”

Organizations that succeed will be those that can:

- Understand and classify their data

- Control how that data moves across systems

- Ensure data integrity before it feeds AI models

- Align architecture with long-term business and risk priorities

Without that foundation, even the most advanced AI capabilities become unreliable.

“If the data is wrong, everything built on top of it is wrong,” Bourgeix says. “That includes your decisions.”

That shift reframes AI from a tool problem to a systems problem that spans infrastructure, governance and leadership alignment.
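To make “understand and classify” and “ensure data integrity before it feeds AI models” more concrete, here is a minimal Python sketch of a pre-ingestion check. It is illustrative only and not a method described by Bourgeix or ESI Convergent: the `DataAsset` record, the `ALLOWED_FOR_AI` policy, the `ai_readiness_problems` function and the sample names are all hypothetical. It simply shows what “know what the data is, who owns it, who can access it and whether it has changed” might look like as a gate in front of an AI pipeline.

```python
import hashlib
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class DataAsset:
    """Hypothetical inventory record: where data lives, what it is, who may use it."""
    name: str
    location: str
    classification: Optional[str]  # e.g. "public", "internal", "restricted"; None = never classified
    owner: Optional[str]
    allowed_roles: Set[str] = field(default_factory=set)
    content: bytes = b""
    expected_sha256: Optional[str] = None  # hash recorded when the asset was catalogued


# Illustrative policy only: which classifications may feed an AI model.
ALLOWED_FOR_AI = {"public", "internal"}


def ai_readiness_problems(asset: DataAsset, requesting_role: str) -> List[str]:
    """Return reasons this asset should NOT feed an AI model; an empty list means it passes."""
    problems = []
    # Understand and classify: unclassified or sensitive data is blocked.
    if asset.classification is None:
        problems.append("unclassified")
    elif asset.classification not in ALLOWED_FOR_AI:
        problems.append(f"classification '{asset.classification}' not approved for AI use")
    # Accountability: someone must own the data.
    if asset.owner is None:
        problems.append("no accountable owner")
    # Access control: the consuming pipeline must be explicitly allowed.
    if requesting_role not in asset.allowed_roles:
        problems.append(f"role '{requesting_role}' lacks access")
    # Integrity: content must match the hash recorded at cataloguing time.
    if asset.expected_sha256 is None:
        problems.append("no integrity baseline")
    elif hashlib.sha256(asset.content).hexdigest() != asset.expected_sha256:
        problems.append("content changed since it was catalogued")
    return problems


if __name__ == "__main__":
    readings = b"sensor_id,temp_c\n1,21.5\n"
    asset = DataAsset(
        name="line3_sensor_readings",
        location="ot-historian/line3",  # hypothetical OT data source
        classification="internal",
        owner="plant-operations",
        allowed_roles={"ai-pipeline"},
        content=readings,
        expected_sha256=hashlib.sha256(readings).hexdigest(),
    )
    print(ai_readiness_problems(asset, "ai-pipeline"))  # []

    # Simulate silent tampering upstream of the model.
    asset.content = b"sensor_id,temp_c\n1,99.9\n"
    print(ai_readiness_problems(asset, "ai-pipeline"))
    # ['content changed since it was catalogued']
```

In a real environment the inventory would come from a data catalog rather than hand-built records. The design point is that the check runs before ingestion, so wrong or unowned data is stopped upstream of the model instead of being discovered later in its outputs.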

How OT and IT convergence expands AI cybersecurity risk

The convergence of OT and IT is also reshaping the cybersecurity landscape in ways many organizations have not fully absorbed.

Historically, OT systems operated in relative isolation. Today, they are increasingly connected to enterprise networks and cloud environments, often without the segmentation or controls needed to secure them.

“There’s no effective way to secure all that data if you don’t even know what’s connected,” Bourgeix says.

At the same time, adversaries are evolving. AI is now being used to automate attacks, scale fraud and exploit vulnerabilities across interconnected systems.

“Adversarial AI is already here. It’s being used at scale; it’s just not widely understood yet,” Bourgeix cautions.

That combination of an expanded attack surface and more sophisticated threats creates a level of systemic exposure that traditional security models were never designed to handle.

Why AI governance lags behind enterprise adoption

Despite growing awareness of these risks, governance remains inconsistent. Frameworks from organizations such as the National Institute of Standards and Technology (NIST) are beginning to take shape, and regulatory efforts are advancing globally. But there is still no unified model for how AI should be governed at scale.

Part of the challenge is structural. Part of it is behavioral.

“Organizations don’t avoid security because they don’t care. They avoid it because it creates friction,” Bourgeix notes.

Security slows things down. It introduces complexity. And in environments where speed, efficiency and user experience are prioritized, those tradeoffs are often rejected. The result is a consistent pattern: Organizations adopt AI quickly, but delay the harder work of building governance, segmentation and control.

“We’ve prioritized convenience over control,” he says, “and that tradeoff is now catching up with us.”

What CEOs and CIOs should do before scaling AI

The path forward is less about adopting more AI and more about asking better questions. Bourgeix points to three immediate priorities:

- Define the AI strategy. Not how AI is being used, but why. What specific business problems does it solve, and what value does it create?

- Understand the current data environment and infrastructure. Where does data live? How is it structured? Who has access? Without that baseline, any AI initiative is operating in the dark.

- Design the future architecture deliberately. That includes data governance, identity management, segmentation and security controls that align with how AI will actually be used. It’s not just about having data; it’s about having the right data, structured correctly, and protected throughout its life cycle.

In many cases, that may require building new environments in parallel rather than retrofitting legacy systems.

“Trying to retrofit existing systems can be extremely difficult,” Bourgeix says. “They were never designed for this level of complexity.”

Why an AI-driven security incident could force a governance reset

The current trajectory is unlikely to continue unchanged.

As AI adoption accelerates and systems become more interconnected, the probability of a high-impact failure increases. Whether through compromised data, automated attacks or decisions based on flawed outputs, a defining incident is increasingly likely.

“We are very close to a moment where something happens that forces organizations to take this seriously,” Bourgeix says.

That moment will not be about the failure of AI itself, but about the systems and decisions surrounding it.

“It’s not the AI that’s going to hurt you. It’s how you use it, and how vulnerable you’ve made yourself in the process,” he notes.

For organizations willing to address those vulnerabilities now, the opportunity remains significant. But the window to do so proactively is narrowing.

TL;DR | Summary

1. Most organizations are building AI on an unstable foundation.

AI adoption is accelerating faster than the underlying infrastructure, data architecture, and governance models needed to support it. Many organizations lack basic visibility into where their data lives, how it’s structured and who has access to it, creating significant risk before AI even enters the equation.

2. The real risk isn’t AI. It’s how organizations are using it.

AI itself is not inherently dangerous, but deploying it without clear data controls, segmentation between IT and operational systems, and defined governance introduces systemic vulnerabilities. Poor data integrity and unclear architecture can lead to flawed outputs, misinformed decisions and exposure to manipulation.

3. Security is being deprioritized in favor of speed and convenience.

Organizations consistently avoid implementing robust security measures because they introduce friction. Leadership often prioritizes ease of use and business velocity over long-term risk mitigation, despite growing exposure to adversarial AI, ransomware and data exploitation.

4. A forced inflection point is coming.

Governance and best practices are still catching up to real-world AI use. Without proactive action, a significant AI-driven incident, whether tied to misinformation, compromised data or automated attacks, is likely to force organizations to rethink their approach to infrastructure, security and oversight.

About the Author

Jess Mand

Contributor

Jess Mand is an award-winning communications strategist and founder of INDEMAND Communications, where she helps organizations translate complex ideas into clear, compelling narratives that drive connection and action. She partners with Fortune 500 companies, growth-stage firms, and mission-driven organizations to design communication strategies, content programs, and experiential campaigns that engage employees and elevate leadership messages. Known for her creative storytelling and pragmatic approach, Jess brings a rare blend of strategic insight and human-centered perspective to every project she leads.
